How to Optimize Your Store If You Can't Run A/B Tests

An optimization approach for low volume stores

Hey, I’m Shane. Welcome to another issue of The CRO Weekly, where each week I explore how to build a high-converting ecommerce store.

If your store has fewer than 1,000 orders per month, you probably can’t run A/B tests.

Does that mean low-volume stores can’t do conversion rate optimization? Not necessarily. We just need to change the way we work.

In this post, I’m going to give a basic overview of why we can’t run A/B tests on low-volume stores before diving into how to run optimization campaigns without them.

Let’s jump right in.

The Statistical Requirements to Run A/B Tests

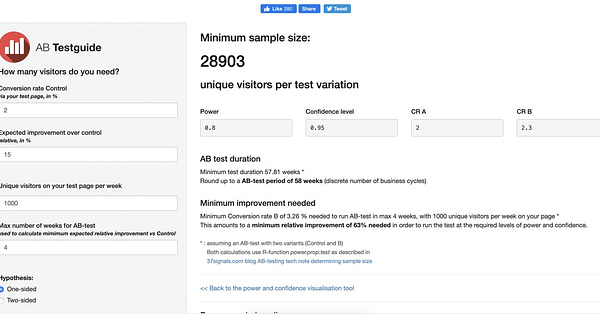

Understanding the statistics behind A/B testing can be complicated. Luckily, people have built open-source calculators that give us the answers we need. The tool we’ll be using today is the A/B Test Size Calculator.

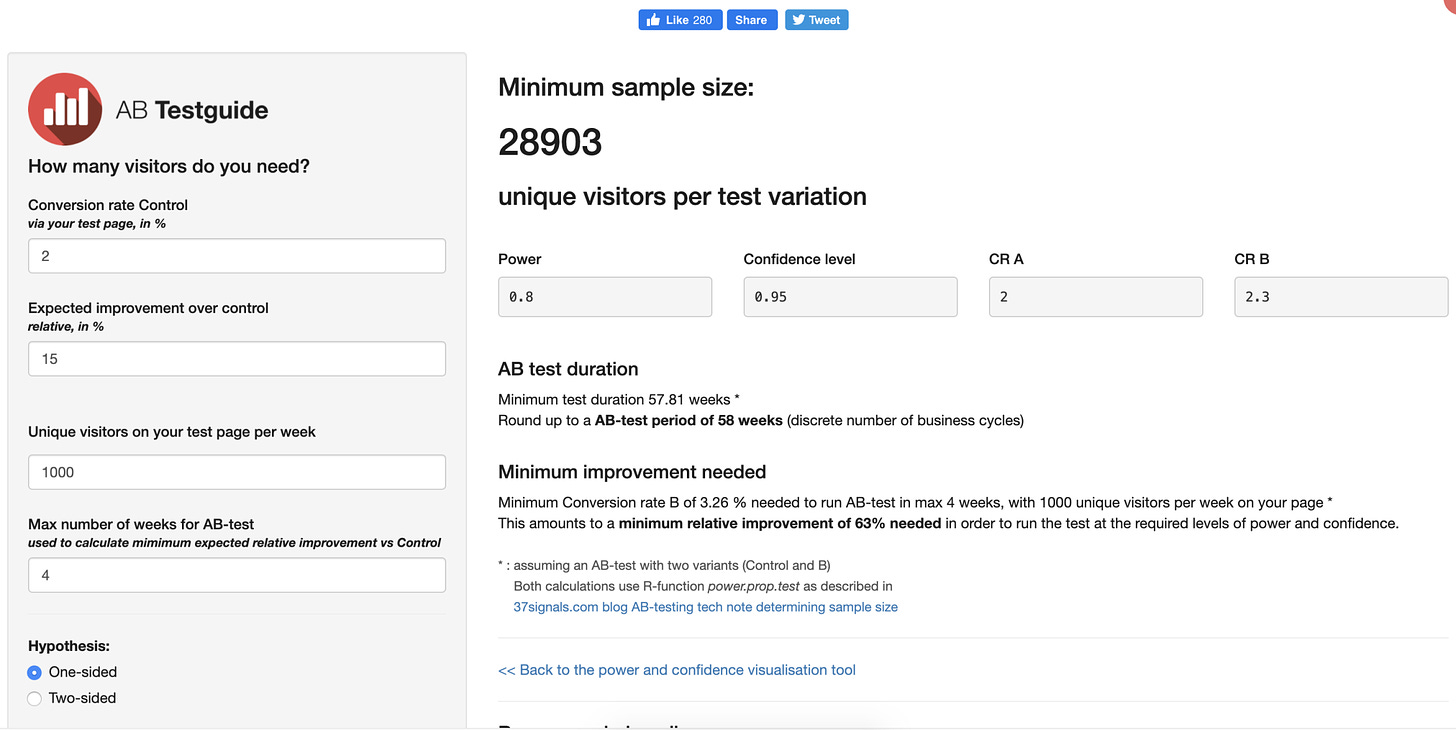

When you go to the website, this is what you’ll see:

On the left side we have our initial inputs:

Let’s break down each of these inputs:

Conversion Rate Control

This is your store’s current average conversion rate. It is the baseline that the new version is compared against.

Expected Improvement Over Control

If we were to run an A/B test, this is the relative difference in performance we expect between A (the current site) and B (the new update). This matters because the size of the change determines how easy it is to tell a *true* improvement from random noise. A 50% improvement is obvious, whereas a 1% improvement could really be no improvement at all.

Unique visitors on your test page per week

This helps us determine how long it will take to get the required sample size for statistical significance. We will learn more about this shortly.

Max number of weeks for AB-test

This input is simply a goal. Typically, we want to be able to run an A/B test in 4 weeks or less. Anything longer and the number of tests you can run per year starts to drop considerably. We will see later how this number is used.

For our example, we’re going to assume:

2% - Conversion rate control

15% - Expected Improvement over control

10,000 - Unique visitors on your test page per week

4 - Max number of weeks for AB-test

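With these assumptions, we currently make 200 sales per week (10,000 × 0.02), or roughly 800 per month.

There is another set of inputs we can change, but we won’t. All you need to know is that 80% statistical power with a 95% confidence level is a solid default: we accept about a 5% chance of calling a winner when nothing really changed, and about a 20% chance of missing a real improvement of the expected size.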

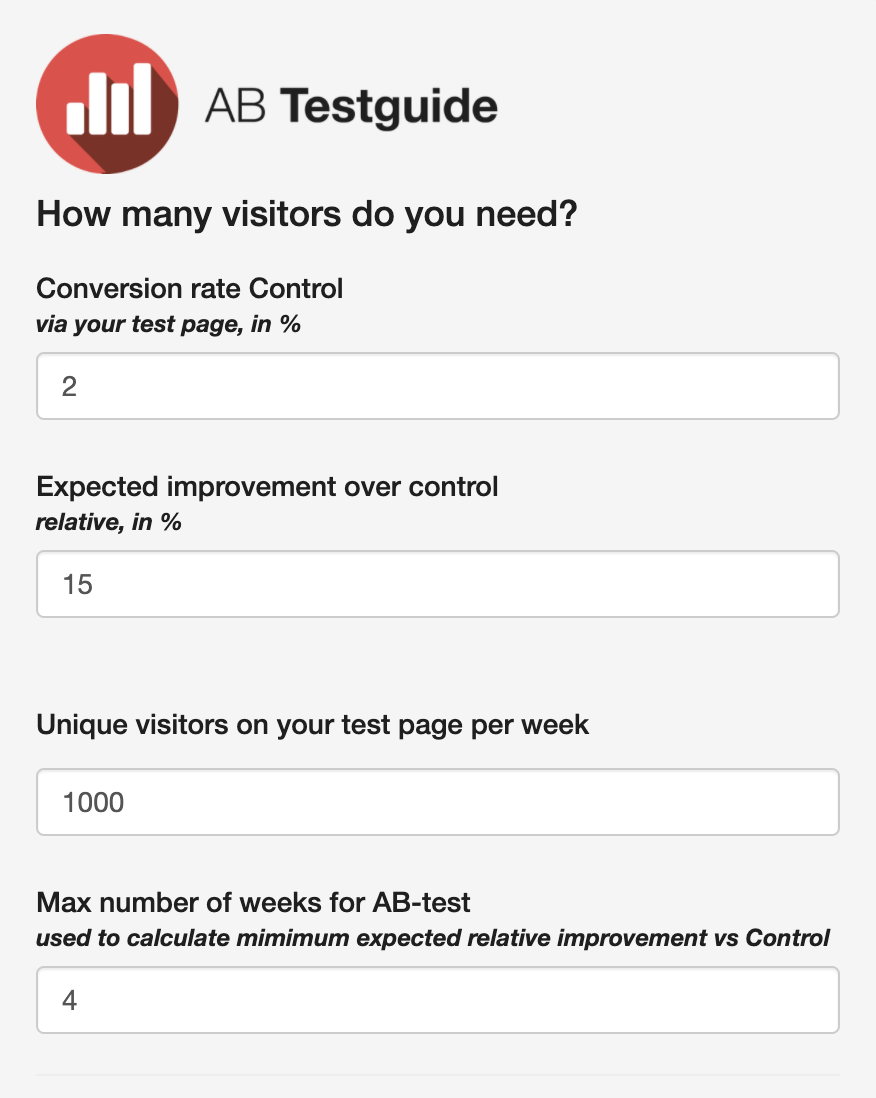

With these inputs, here are the results we get:

The results show that our minimum sample size is 28,903 per variation. That’s the number of visitors each of A and B needs (57,806 in total) before a 15% increase in conversions from A to B can be detected with statistical significance.

Based on our unique visitors (10,000 per week), the calculator tells us that we will need to run the test for at least 5.78 weeks for enough people to see it. Tests should run in whole weeks, so we round up to 6 weeks.
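The duration figure is just arithmetic on the sample size, and a quick sketch makes that explicit. This assumes traffic is split evenly between A and B and that every visitor to the test page enters the test; the function name `duration_weeks` is mine, not something from the calculator.

```python
from math import ceil


def duration_weeks(sample_per_variation, weekly_visitors, variations=2):
    """Weeks of traffic needed to fill every variation with enough visitors."""
    total_needed = sample_per_variation * variations
    return total_needed / weekly_visitors


# Numbers from the example above: 28,903 per variation, 10,000 visitors per week
weeks = duration_weeks(28_903, 10_000)
print(f"{weeks:.2f} weeks, rounded up to {ceil(weeks)} full weeks")
```

Rounding up to whole weeks also means every day of the week is represented equally in both variations.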

What does this tell us? Fundamentally, we can only run a new set of tests every 6 weeks, or around 1.5 months. That doesn’t sound too bad, right?

Well, that assumes the changes we made have a 15% impact on conversions. What if our changes only have a 7% impact? That’s still a good improvement, right?

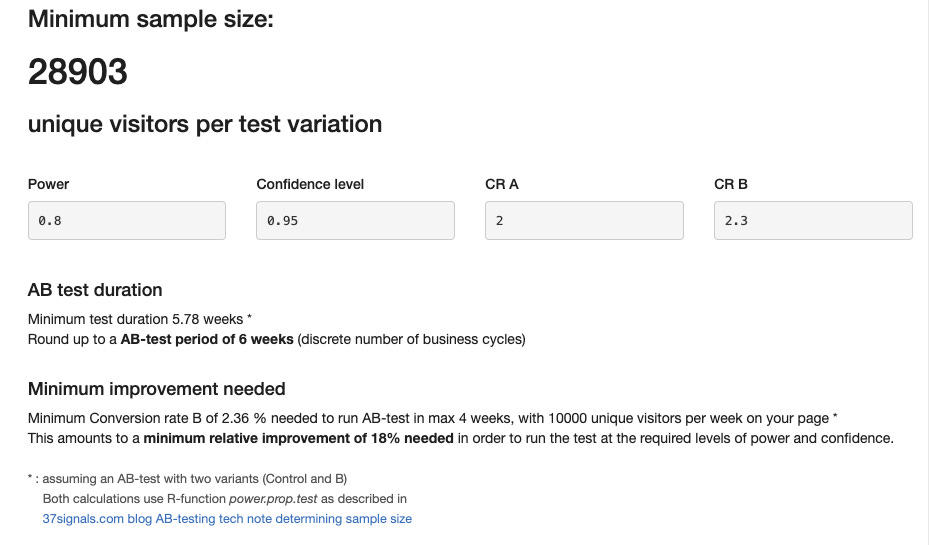

Here are the results if we change our ‘expected improvement over control’ to 7%:

Our minimum sample size is now 127,886 per variation, and our minimum test duration is now 25.58 weeks, or around 6 months. That’s extremely inefficient. This is why low-volume sites require a different strategy.
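If you want to see why the sample size explodes as the expected improvement shrinks, here is a minimal sketch using the textbook normal-approximation formula for comparing two conversion rates. I don’t know the exact formula the calculator uses, so treat this as an assumption: with a one-sided test at 95% confidence and 80% power it lands very close to the figures quoted above, but it is not guaranteed to match them to the visitor. The function name and parameters are my own.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift,
                              confidence=0.95, power=0.80, one_sided=True):
    """Approximate visitors needed per variation to detect a relative lift.

    Assumption: standard two-proportion z-test sample size formula,
    which may differ from what any particular online calculator does.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2

    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha if one_sided else 1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# 2% baseline conversion rate, shrinking expected improvement
for lift in (0.15, 0.07):
    n = sample_size_per_variation(0.02, lift)
    print(f"{lift:.0%} lift: about {n:,} visitors per variation")
```

The key thing to notice is the squared difference in the denominator: roughly halving the expected improvement more than quadruples the traffic you need.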

How to Optimize Without A/B Tests

The typical CRO process goes like this:

1. Research
2. Develop hypotheses (opportunities to improve)
3. Design experiments to test hypotheses
4. Run experiments
5. Analyze results
6. Document learnings
7. Back to step 2

This works because you work through these cycles on an ongoing basis: finding new ideas, implementing new tests, and analyzing the results of your changes.

Without testing, the best we can do is simple before-and-after comparisons. This is why we have to change our entire process.

Instead of a continual process, we need a staggered approach.

Month 1: Heavy research and planning.

Month 2: Design, build, and implement X (say 5, 10, or 15) new changes all at once.

Month 3: Heavy research and planning. Analyze the results from last month’s changes.

Month 4: Design, build, and implement X new changes.

The idea here is that we implement a large number of changes all at once and measure the impact of all of them together. We bundle many changes because the bigger the overall change, the bigger the shift in customer behavior (i.e., conversion rate) we can expect, and bigger shifts are easier to detect.

Unfortunately, some of the individual changes may increase conversion rate while others decrease it, and we won’t be able to tell which is which. That’s a trade-off we’ll have to accept.

Let’s take a deeper look at this breakdown.

Imagine you’re starting a new optimization campaign on January 1st. The plan would be to spend all of January doing in-depth research and developing hypotheses. For example: “If we remove the required account creation from the checkout process, we will see an increase in conversions.”

These hypotheses can be categorized by different conversion factors like distractions, friction, motivation, clarity. That will allow us to develop a sort of grand hypothesis for each category. Something like “I believe implementing all of the changes that aim to reduce friction throughout the store will improve conversions”. We can then take all of the tasks we view as ‘removing friction from the site’ and combine them into one large project.

Doing the work

Then, in February, we actually do all of the work of designing and implementing the changes. Instead of releasing them as soon as they’re done, though, we’ll wait until all of the changes are ready and deploy them together on February 28th.

This allows us to split our analytics into two buckets: all data prior to February 28th, and all data after February 28th. Then, we will make sure not to make any changes to the live site during the month of March. Instead, we will be planning, designing, and building a new large set of website changes that we can deploy on March 31st, repeating the process again.
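To make the two-bucket idea concrete, here is a rough sketch of the comparison. It assumes you can export daily sessions and orders from your analytics tool; the field names, the year, and the numbers below are all made up for illustration. The deploy day itself is left out of both buckets because it contains a mix of old and new experiences.

```python
from datetime import date


def conversion_rate(rows):
    """Total orders divided by total sessions for a list of daily rows."""
    sessions = sum(r["sessions"] for r in rows)
    orders = sum(r["orders"] for r in rows)
    return orders / sessions if sessions else 0.0


def before_after(rows, deploy_date):
    """Split daily rows around the deploy date and compare conversion rates."""
    before = [r for r in rows if r["day"] < deploy_date]
    after = [r for r in rows if r["day"] > deploy_date]
    cr_before = conversion_rate(before)
    cr_after = conversion_rate(after)
    lift = (cr_after - cr_before) / cr_before if cr_before else float("nan")
    return cr_before, cr_after, lift


# Hypothetical daily export (illustrative numbers only)
daily = [
    {"day": date(2023, 2, 26), "sessions": 1400, "orders": 28},
    {"day": date(2023, 2, 27), "sessions": 1450, "orders": 29},
    {"day": date(2023, 2, 28), "sessions": 1500, "orders": 31},  # deploy day, excluded
    {"day": date(2023, 3, 1), "sessions": 1420, "orders": 32},
    {"day": date(2023, 3, 2), "sessions": 1380, "orders": 31},
]

cr_before, cr_after, lift = before_after(daily, date(2023, 2, 28))
print(f"Before: {cr_before:.2%}  After: {cr_after:.2%}  Change: {lift:+.1%}")
```

In practice you would feed in a full month on each side of the deploy. Keep in mind that a before-and-after comparison can’t separate your changes from seasonality or shifts in traffic, which is exactly why we freeze the site during the measurement month.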

That’s all for today’s issue. As always, if you enjoyed this issue, please let me know on Twitter.