CRO Weekly #10: Stop wasting your time running AB tests like this

The other day I saw someone running an AB test.

Only 400 people had seen each variation. It had only been running for a single day. The difference in goal completion rates was around 10%.

Yet, the tester started making claims.

“Ah yea this makes sense because of X.”

“We expected Y to happen because of this change and looks like it is.”

“This looks promising.”

I can’t help but get frustrated. This is statistics after all. There’s no room for interpretation here. Either you’re running a test correctly or your results mean nothing.

Run an A/A test to see what I mean

A great way to learn the perils of improper testing is to run an A/A test. That means setting up an AB test without making any changes, so both groups see the exact same page. It sounds silly, but what you’ll learn is profound.

On day one, assuming you had 1k visitors, your results will probably look something like this:

Yes, even with the exact same page, you could see substantial differences in conversion rate. Just think about it by asking yourself the simple question:

What are the odds that 12 of the 22 people who decided to buy just happened to fall into one side of the test?

That seems highly probable, right? Let’s consider the impact.

With 10/500 visitors converting on A1, that’s a 2% conversion rate.

With 12/500 visitors converting on A2, that’s a 2.4% conversion rate.

A 20% increase! All for doing nothing!
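
To get a feel for how often this happens, here is a minimal Python sketch that simulates a batch of A/A tests. It assumes 500 visitors per side and a true 2% conversion rate on both sides, borrowed from the example above. The function name, the number of simulated runs, and the seed are made up for illustration.

```python
import random

def share_of_fake_winners(visitors_per_side=500, true_rate=0.02, runs=2000, seed=10):
    """Share of simulated A/A tests where one side 'wins' by 20% or more."""
    rng = random.Random(seed)
    fake_winners = 0
    for _ in range(runs):
        # Both sides see the exact same page, so both use the same true rate.
        a = sum(rng.random() < true_rate for _ in range(visitors_per_side))
        b = sum(rng.random() < true_rate for _ in range(visitors_per_side))
        low, high = min(a, b), max(a, b)
        # Integer form of "high is at least 1.2x low" (a 20%+ relative lift).
        if high > low and 5 * high >= 6 * low:
            fake_winners += 1
    return fake_winners / runs

share = share_of_fake_winners()
print(f"A/A tests showing a 20%+ 'lift': {share:.0%}")
```

Under these assumptions, a 20% gap between two identical pages shows up more often than not.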

Even worse, your AB testing software may tell you this is ‘statistically significant’.

The problem with statistical significance

Statistical significance is basically a measure of how likely it is that you’d see a difference this large by chance alone, if your change actually did nothing. That likelihood is called the P-value, and a result is usually called statistically significant when the P-value is less than 5%.

I’ll save you the boring math. Basically, the larger the difference is between your two results, the less likely it is to be down to chance, and the lower your P-value. That is, the more ‘statistically significant’ it is.
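
If you do want a peek at the math, here is a rough sketch using a pooled two-proportion z-test, one common way to get a P-value for a conversion test. Your testing tool may use a different method, and the function name and example counts are only for illustration.

```python
from math import erfc, sqrt

def two_sided_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided P-value from a pooled two-proportion z-test."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = abs(rate_b - rate_a) / std_err
    # erfc(z / sqrt(2)) is the two-sided tail area of the normal curve.
    return erfc(z / sqrt(2))

# Same 500 visitors per side, with a growing gap between the two results.
for conv_b in (12, 20, 30):
    p = two_sided_p_value(10, 500, conv_b, 500)
    print(f"10 vs {conv_b} conversions: P-value = {p:.3f}")
```

The P-value drops as the gap grows. Notice that nothing in the function asks how long the test has been running.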

The problem is, we’re only comparing results here. There is no mention of how long the test has been running for.

That’s why we need to look to another metric: statistical power.

Statistical power in 10 seconds

Again, I’ll save you the boring details. Statistical power is a measure of how likely it is that your test will produce a P-value of less than 5% when the change really does have the impact you expect, given how many people have gone through your test.

Essentially, it keeps statistical significance honest by tying it to your sample size. This is what’s missing from most tests. Even if you are measuring statistical significance, if you ignore statistical power, a lot of your test results are likely wrong.
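
As a rough illustration, power can be approximated with the normal distribution. This sketch assumes a 2% baseline conversion rate (borrowed from the A/A example), a 5% significance threshold, and a two-sided test. The function name and the sample sizes tried are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def approx_power(baseline, relative_lift, n_per_variant, alpha=0.05):
    """Approximate chance of a significant result if the lift is real."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    pooled = (p1 + p2) / 2
    se_null = sqrt(2 * pooled * (1 - pooled) / n_per_variant)
    se_alt = sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / n_per_variant)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    # Chance the observed gap clears the significance cutoff in the
    # expected direction (the tiny opposite tail is ignored).
    return NormalDist().cdf((abs(p2 - p1) - z_crit * se_null) / se_alt)

for n in (500, 5000, 21000):
    print(f"{n:>6} visitors per variant: power = {approx_power(0.02, 0.20, n):.0%}")
```

With only a few hundred visitors per variant, a real 20% lift would rarely register as significant under these assumptions.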

Okay, so what does that mean for you?

For every test you should make an assumption about its impact. For now, let’s assume the change you’re making will increase your conversion rate by 20%.

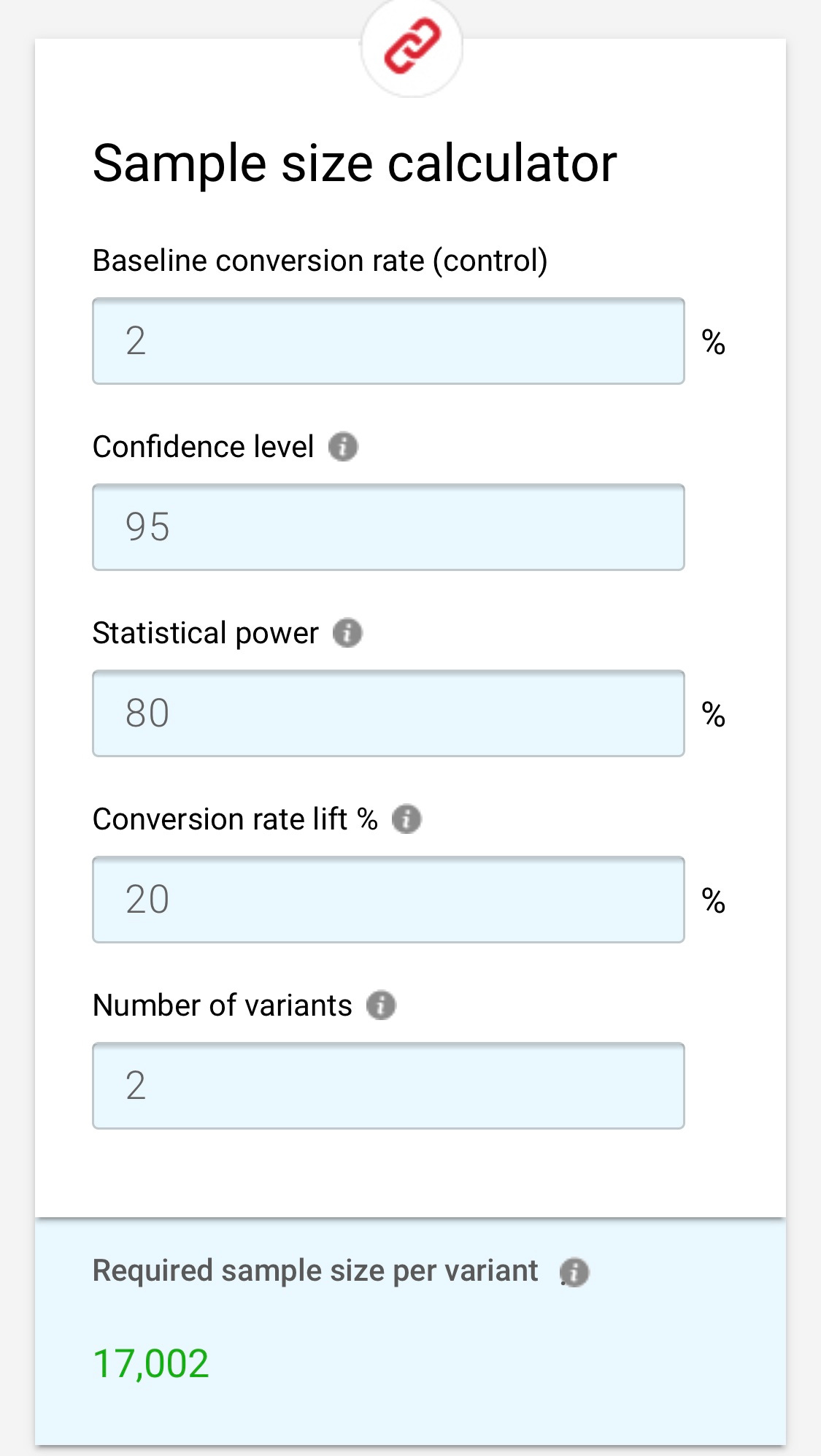

We can go over to this handy sample size calculator by CXL and plug in our numbers:

This does the math for us. If the change has a 20% impact on our CR, we can be 95% confident that this is a result of the change we made only after 17,002 people see each variant.

Yes, that is 34,004 people in total. If you’re worried about your tests, it gets worse. Our 20% impact was only an assumption.

Let’s say we go and check the test results after 34,004 people have seen it. Turns out, the test is only showing a 10% increase in CR.

Whatever, that’s still a good improvement, right? Sure, but let’s go back to the calculator:

To be confident our 10% impact is a result of the change we made, and not just chance, we need 64,917 people to go through each variant. Yes, that is 129,834 website visitors in total!
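
If you’re curious what a calculator like this does under the hood, here is a sketch of a standard sample size formula for comparing two conversion rates. The 2% baseline and 80% power are my assumptions, so don’t expect it to reproduce the exact figures above, which depend on the inputs and method the calculator used. The function name is made up.

```python
from math import ceil, sqrt
from statistics import NormalDist

def visitors_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect a relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    pooled = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance cutoff
    z_power = NormalDist().inv_cdf(power)          # desired power
    numerator = (z_alpha * sqrt(2 * pooled * (1 - pooled))
                 + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

n_20 = visitors_per_variant(0.02, 0.20)
n_10 = visitors_per_variant(0.02, 0.10)
print(f"20% lift: {n_20:,} per variant")
print(f"10% lift: {n_10:,} per variant ({n_10 / n_20:.1f}x as many)")
```

The pattern to notice is that halving the expected impact roughly quadruples the traffic you need, which is the same jump the calculator showed.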

What you should do

For some of you these results may be disheartening. Most sites don’t even get 129,000 visitors in a quarter, let alone a month. In the end, there are two things you should take away from all of this:

First, use a sample size calculator to determine if you’re properly powering your tests. If you’re not, you’re at best not making any improvements and at worst, actively harming yourself.

Second, the less traffic you have, the larger the changes you need to test. Swapping out an image, changing your button color, or tweaking one line of copy all fall deep into that <10% impact zone. Redesigning the buy box on your PDP, changing your offer, or rewriting the copy for your primary landing page have the potential for a larger impact.

Maybe more important than both of these: don’t pretend this stuff doesn’t matter. If you don’t have the traffic and conversion numbers to run a proper test, don’t run one.

That’s all, folks.

Thanks for reading issue #10 of The CRO Weekly. If you loved it, hated it, or have questions about it, hit me up on Twitter @shanerostad.